|

|

CDH3

https://ccp.cloudera.com/display/CDHDOC/CDH3+Installation

https://ccp.cloudera.com/display/CDHDOC/HBase+Installation

https://ccp.cloudera.com/display/CDHDOC/ZooKeeper+Installation

https://ccp.cloudera.com/display/CDHDOC/CDH3+Deployment+on+a+Cluster

-install

http://archive.cloudera.com/redhat/cdh/cdh3-repository-1.0-1.noarch.rpm

http://archive.cloudera.com/redhat/6/x86_64/cdh/cdh3-repository-1.0-1.noarch.rpm

rpm -ivh cdh3-repository-1.0-1.noarch.rpm

yum --nogpgcheck localinstall cdh3-repository-1.0-1.noarch.rpm

rpm --import http://archive.cloudera.com/redhat/cdh/RPM-GPG-KEY-cloudera

--hadoop

yum search hadoop

yum install hadoop-0.20 hadoop-0.20-native

yum install hadoop-0.20-<daemon type> #namenode|datanode|secondarynamenode|jobtracker|tasktracker

--hbase

yum install hadoop-hbase

yum install hadoop-hbase-master

yum install hadoop-hbase-regionserver

yum install hadoop-hbase-thrift

yum install hadoop-hbase-rest

--zookeeper

yum install hadoop-zookeeper

yum install hadoop-zookeeper-server

-config

--hadoop

alternatives --display hadoop-0.20-conf

cp -r /etc/hadoop-0.20/conf.empty /etc/hadoop-0.20/conf.cluster

alternatives --install /etc/hadoop-0.20/conf hadoop-0.20-conf /etc/hadoop-0.20/conf.cluster 50

alternatives --set hadoop-0.20-conf /etc/hadoop-0.20/conf.cluster

cd /data/hadoop/

mkdir dfs && chown hdfs:hadoop dfs

mkdir mapred && chown mapred:hadoop mapred

chmod 755 dfs mapred

hadoop fs -mkdir /data/hadoop/temp

hadoop fs -mkdir /mapred/system

hadoop fs -chown mapred:hadoop /mapred/system

core-site.xml:

<property>

<name>fs.default.name</name>

<value>hdfs://namenode:9000</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/data/hadoop-${user.name}/</value>

</property>

hdfs-site.xml:

<property>

<name>dfs.data.dir</name>

<value>${hadoop.tmp.dir}/dfs/data</value>

</property>

<property>

<name>dfs.block.size</name>

<value>134217728</value>

</property>
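The dfs.block.size value is given in bytes; 134217728 corresponds to 128 MiB. A quick way to derive such a value in the shell:

```shell
# dfs.block.size is expressed in bytes: 128 MiB = 128 * 1024 * 1024
echo $((128 * 1024 * 1024))
```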

mapred-site.xml:

<property>

<name>mapred.job.tracker</name>

<value>jobtracker:9001</value>

</property>

<property>

<name>mapred.child.java.opts</name>

<value>-Dfile.encoding=utf-8 -Duser.language=zh -Xmx512m</value>

</property>

<property>

<name>mapred.system.dir</name>

<value>/mapred/system</value>

</property>

--zookeeper

#!/bin/sh

ZOO=/usr/lib/zookeeper

java -cp $ZOO/zookeeper.jar:$ZOO/lib/log4j-1.2.15.jar:$ZOO/conf:$ZOO/lib/jline-0.9.94.jar org.apache.zookeeper.ZooKeeperMain -server $1:2181

crontab -e

15 * * * * java -cp $classpath:/usr/lib/zookeeper/lib/log4j-1.2.15.jar:/usr/lib/zookeeper/lib/jline-0.9.94.jar:/usr/lib/zookeeper/zookeeper.jar:/usr/lib/zookeeper/conf org.apache.zookeeper.server.PurgeTxnLog /var/zookeeper/ -n 5

--hbase

hadoop fs -mkdir /hbase

hadoop fs -chown hbase:hbase /hbase

crontab -e

0 10 * * * rm -rf `ls /usr/lib/hbase/logs/ | grep -P 'hbase\-hbase\-.+\.log\.[0-9]{4}\-[0-9]{2}\-[0-9]{2}' | sed -r 's/^(.+)$/\/usr\/lib\/hbase\/logs\/\1/g'` >> /dev/null &
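The same cleanup can probably be written with find, which avoids parsing ls output. A minimal sketch: the function name purge_hbase_logs and its optional directory argument are hypothetical, and the glob is a slightly stricter match than the grep pattern above (it must match the whole file name).

```shell
#!/bin/sh
# purge_hbase_logs [DIR] (hypothetical helper): delete rotated HBase logs
# named hbase-hbase-*.log.YYYY-MM-DD directly inside DIR.
# DIR defaults to the log directory used in the cron entry above.
purge_hbase_logs() {
    dir="${1:-/usr/lib/hbase/logs}"
    find "$dir" -maxdepth 1 -type f \
        -name 'hbase-hbase-*.log.[0-9][0-9][0-9][0-9]-[0-9][0-9]-[0-9][0-9]' \
        -exec rm -f {} +
}
```

Saved as a script that ends with a call to purge_hbase_logs, it could be scheduled from cron in place of the one-liner.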

<property>

<name>hbase.cluster.distributed</name>

<value>true</value>

</property>

<property>

<name>hbase.rootdir</name>

<value>hdfs://node1:9000/hbase</value>

</property>

<property>

<name>hbase.tmp.dir</name>

<value>/data0/hbase</value>

</property>

<property>

<name>hbase.zookeeper.quorum</name>

<value>node1,node2,node3</value>

</property>

If access needs to be restricted by IP:

iptables -F

iptables -A INPUT -i lo -j ACCEPT

iptables -A INPUT -m state --state ESTABLISHED,RELATED -j ACCEPT

iptables -A INPUT -p icmp -m icmp --icmp-type any -j ACCEPT

iptables -A INPUT -i eth0 -p tcp --dport 22 -j ACCEPT

iptables -A INPUT -i eth0 -p tcp -s 192.168.1.1 -j ACCEPT

iptables -A INPUT -i eth0 -p tcp -s 192.168.1.2 -j ACCEPT

iptables -A INPUT -i eth0 -p tcp -s 192.168.1.3 -j ACCEPT

iptables -A INPUT -i eth0 -p tcp -s 192.168.1.4 -j ACCEPT

iptables -A INPUT -i eth0 -p tcp -s 192.168.1.5 -j ACCEPT

iptables -A INPUT -j DROP
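The per-host ACCEPT rules above differ only in the source address, so they can be generated from a list. A sketch that only prints the commands for review; the function name allow_rules is hypothetical, and the addresses are the ones used above.

```shell
#!/bin/sh
# allow_rules IP... (hypothetical helper): print one ACCEPT rule per
# trusted source address. Nothing is executed here; this is a dry run.
allow_rules() {
    for ip in "$@"; do
        echo "iptables -A INPUT -i eth0 -p tcp -s $ip -j ACCEPT"
    done
}

allow_rules 192.168.1.1 192.168.1.2 192.168.1.3 192.168.1.4 192.168.1.5
```

The printed rules belong after the ESTABLISHED,RELATED rule and before the final DROP.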

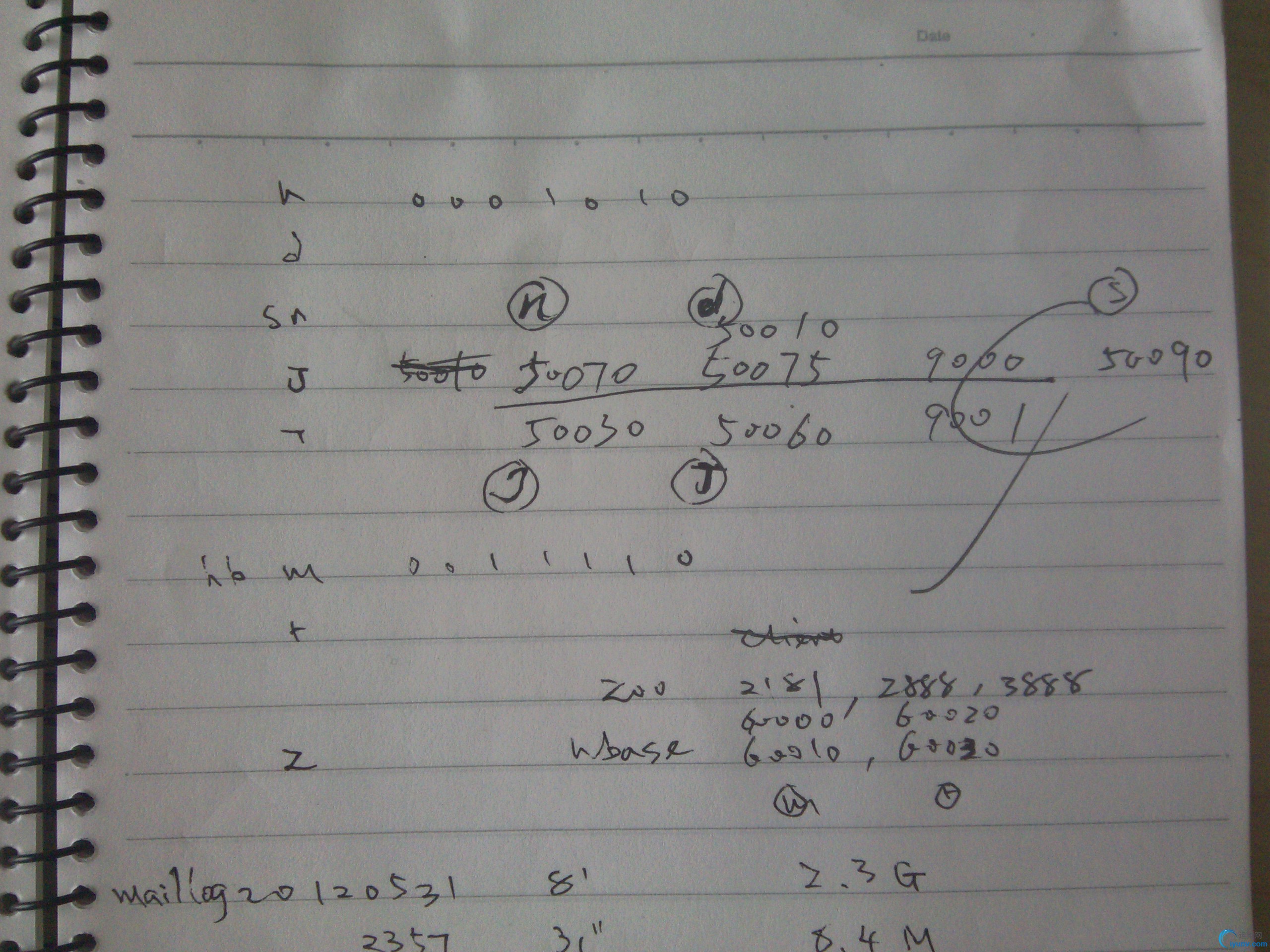

Ports used by each service:

CDH 4.1.2

-core

<property>

<name>fs.defaultFS</name>

<value>hdfs://node0/</value>

</property>

-hdfs

<property>

<name>dfs.namenode.name.dir</name>

<value>/data0/hadoop/dfs/name,/data1/hadoop/dfs/name,/data2/hadoop/dfs/name,/data3/hadoop/dfs/name,/data4/hadoop/dfs/name,/data5/hadoop/dfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>/data0/hadoop/dfs/data,/data1/hadoop/dfs/data,/data2/hadoop/dfs/data,/data3/hadoop/dfs/data,/data4/hadoop/dfs/data,/data5/hadoop/dfs/data</value>

</property>

<property>

<name>dfs.namenode.checkpoint.dir</name>

<value>/data0/hadoop/dfs/namesecondary,/data1/hadoop/dfs/namesecondary,/data2/hadoop/dfs/namesecondary,/data3/hadoop/dfs/namesecondary,/data4/hadoop/dfs/namesecondary,/data5/hadoop/dfs/namesecondary</value>

</property>

-mapred

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

-yarn

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce.shuffle</value>

</property>

<property>

<name>yarn.nodemanager.aux-services.mapreduce.shuffle.class</name>

<value>org.apache.hadoop.mapred.ShuffleHandler</value>

</property>

<property>

<name>yarn.log-aggregation-enable</name>

<value>true</value>

</property>

<property>

<description>Classpath for typical applications.</description>

<name>yarn.application.classpath</name>

<value>

$HADOOP_CONF_DIR,

$HADOOP_COMMON_HOME/*,$HADOOP_COMMON_HOME/lib/*,

$HADOOP_HDFS_HOME/*,$HADOOP_HDFS_HOME/lib/*,

$HADOOP_MAPRED_HOME/*,$HADOOP_MAPRED_HOME/lib/*,

$YARN_HOME/*,$YARN_HOME/lib/*

</value>

</property>

<property>

<name>yarn.resourcemanager.resource-tracker.address</name>

<value>node0:8031</value>

</property>

<property>

<name>yarn.resourcemanager.address</name>

<value>node0:8032</value>

</property>

<property>

<name>yarn.resourcemanager.scheduler.address</name>

<value>node0:8030</value>

</property>

<property>

<name>yarn.resourcemanager.admin.address</name>

<value>node0:8033</value>

</property>

<property>

<name>yarn.resourcemanager.webapp.address</name>

<value>node0:8088</value>

</property>

<property>

<name>yarn.nodemanager.local-dirs</name>

<value>/data0/hadoop/yarn/local,/data1/hadoop/yarn/local</value>

</property>

<property>

<name>yarn.nodemanager.log-dirs</name>

<value>/data0/hadoop/yarn/logs,/data1/hadoop/yarn/logs</value>

</property>

<property>

<name>yarn.nodemanager.remote-app-log-dir</name>

<value>/var/log/hadoop-yarn/apps</value>

</property>

<property>

<name>yarn.app.mapreduce.am.staging-dir</name>

<value>/user</value>

</property>

mkdir /data/hadoop

mkdir /data/hadoop/dfs; chown hdfs:hdfs /data/hadoop/dfs

mkdir /data/hadoop/yarn; chown yarn:yarn /data/hadoop/yarn
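The hdfs-site.xml and yarn-site.xml values above point at /data0 through /data5 rather than /data, so the mkdir/chown steps would likely need repeating per disk. A sketch that prints the commands for review; the function name data_dir_cmds is hypothetical, and creating both dfs and yarn directories on every mount is an assumption (the YARN settings above only use /data0 and /data1).

```shell
#!/bin/sh
# data_dir_cmds MOUNT... (hypothetical helper): print the mkdir/chown
# commands for each mount point. Nothing is executed; this is a dry run.
data_dir_cmds() {
    for m in "$@"; do
        echo "mkdir -p $m/hadoop/dfs $m/hadoop/yarn"
        echo "chown hdfs:hdfs $m/hadoop/dfs"
        echo "chown yarn:yarn $m/hadoop/yarn"
    done
}

data_dir_cmds /data0 /data1 /data2 /data3 /data4 /data5
```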

hadoop namenode -format

service hadoop-hdfs-namenode start

service hadoop-hdfs-secondarynamenode start

service hadoop-hdfs-datanode start

hadoop fs -mkdir /user/history /var/log/hadoop-yarn /tmp

hadoop fs -chmod 1777 /user/history /tmp

hadoop fs -chown yarn /user/history

hadoop fs -chown yarn:mapred /var/log/hadoop-yarn

service hadoop-yarn-resourcemanager start

service hadoop-yarn-nodemanager start

service hadoop-mapreduce-historyserver start

<property>

<name>yarn.web-proxy.address</name>

<value>host:port</value>

</property>

service hadoop-yarn-proxyserver start

tickTime=2000

dataDir=/data1/zookeeper

clientPort=2181

server.1=node1:2888:3888

server.2=node2:2888:3888

server.3=node3:2888:3888

service zookeeper-server init --myid=n

service zookeeper-server start
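The n passed to --myid has to match the server.n entry for that host in zoo.cfg. A sketch of looking the number up instead of typing it by hand; the function name zk_myid is hypothetical.

```shell
#!/bin/sh
# zk_myid HOST CFG (hypothetical helper): print the N of the
# "server.N=HOST:port:port" line in CFG; prints nothing if HOST is absent.
zk_myid() {
    awk -F'[=:]' -v host="$1" \
        '/^server\.[0-9]+=/ && $2 == host { sub(/^server\./, "", $1); print $1 }' "$2"
}
```

Assuming the config lives at /etc/zookeeper/conf/zoo.cfg and the short hostname matches the server entries, the init step could then read `service zookeeper-server init --myid="$(zk_myid "$(hostname -s)" /etc/zookeeper/conf/zoo.cfg)"`.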

<property>

<name>hbase.cluster.distributed</name>

<value>true</value>

</property>

<property>

<name>hbase.rootdir</name>

<value>hdfs://node0:8020/hbase</value>

</property>

<property>

<name>hbase.zookeeper.quorum</name>

<value>node1,node2,node3</value>

</property>

hadoop fs -mkdir /hbase

hadoop fs -chown hbase /hbase

service hbase-master start

service hbase-regionserver start

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://node0/metastore</value>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>hive</value>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>mypassword</value>

</property>

<property>

<name>datanucleus.autoCreateSchema</name>

<value>false</value>

</property>

<property>

<name>datanucleus.fixedDatastore</name>

<value>true</value>

</property>

<property>

<name>hive.metastore.uris</name>

<value>thrift://node0:9083</value>

</property>

<property>

<name>hive.support.concurrency</name>

<value>true</value>

</property>

<property>

<name>hive.zookeeper.quorum</name>

<value>node1,node2,node3</value>

</property>

/etc/default/hive-server2

export HADOOP_MAPRED_HOME=/usr/lib/hadoop-mapreduce

mysql -u root

CREATE DATABASE metastore;

USE metastore;

SOURCE /usr/lib/hive/scripts/metastore/upgrade/mysql/hive-schema-0.9.0.mysql.sql;

CREATE USER 'hive'@'metastorehost' IDENTIFIED BY 'mypassword';

REVOKE ALL PRIVILEGES, GRANT OPTION FROM 'hive'@'metastorehost';

GRANT SELECT,INSERT,UPDATE,DELETE,LOCK TABLES,EXECUTE ON metastore.* TO 'hive'@'metastorehost';

FLUSH PRIVILEGES;

sudo -u postgres psql

CREATE USER hiveuser WITH PASSWORD 'mypassword';

CREATE DATABASE metastore;

\c metastore

\i /usr/lib/hive/scripts/metastore/upgrade/postgres/hive-schema-0.9.0.postgres.sql

service hive-metastore start

service hive-server2 start

beeline

!connect jdbc:hive2://localhost:10000 metastore mypassword org.apache.hive.jdbc.HiveDriver |

|

|