|

|

http://sigizmund.com/debugging-hadoop-applications-using-your-eclipse/

Debugging Hadoop applications can be annoying, awfully annoying in fact. But sometimes you need to do it, because logging doesn’t show anything and you’ve tried everything else but still cannot get under Hadoop’s covers. In that case, follow a few simple steps.

1. Download and unpack Hadoop to your local machine.

2. Prepare a small set of data to run the test on.

3. Check that you can actually run Hadoop locally, with something like this (don’t forget to set $HADOOP_CLASSPATH first!):

bin/hadoop jar yourprogram.jar com.company.project.HadoopApp tiny.txt ./out

4. Go to Hadoop’s directory and copy the file bin/hadoop to bin/hdebug.

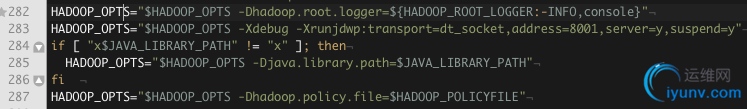

5. Now we need to make Hadoop start in debug mode. To do that, add one line of text to the starting script, bin/hdebug. Here is the line to copy:

-Xdebug -Xrunjdwp:transport=dt_socket,address=8001,server=y,suspend=y

Basically, this instructs Java to start in debug mode and wait for a remote debugger to connect over a socket on port 8001; execution is suspended after startup until the debugger connects.
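
As a rough sketch of what that one line might look like, the snippet below builds the same options and appends them to HADOOP_OPTS. It assumes that your copy of bin/hdebug passes $HADOOP_OPTS to the java command, as the stock bin/hadoop script does as far as I know; check your version. `jdwp_opts` is a hypothetical helper name, not something Hadoop provides. Newer JVMs also accept the same settings spelled as `-agentlib:jdwp=...`, but the older form is kept here to match the text.

```shell
# Sketch only: build the JDWP options described above.
# jdwp_opts is a hypothetical helper, not part of Hadoop.
jdwp_opts() {
    port="${1:-8001}"     # port the JVM listens on for the debugger
    suspend="${2:-y}"     # y = wait for the debugger, n = start running immediately
    printf '%s' "-Xdebug -Xrunjdwp:transport=dt_socket,address=${port},server=y,suspend=${suspend}"
}

# Assumption: bin/hdebug hands $HADOOP_OPTS to the java command line.
HADOOP_OPTS="${HADOOP_OPTS:+$HADOOP_OPTS }$(jdwp_opts 8001 y)"
export HADOOP_OPTS
echo "$HADOOP_OPTS"
```

Calling `jdwp_opts 8001 n` gives the non-suspending variant, which is handy for long-running services that should start without waiting for a debugger.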

Now start your grid application as you did in step 3, but this time use the bin/hdebug script we’ve created. If you’ve done everything correctly, the program should output something like this:

Listening for transport dt_socket at address: 8001

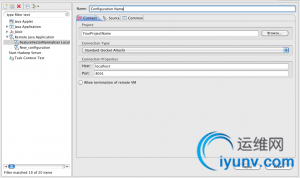

and wait for the debugger. So let’s get it a debugger then! Fire up Eclipse with your project (you likely have it open already, since you’re trying to debug something) and add a new Debug configuration of type “Remote Java Application” that connects to your local machine (localhost) on port 8001.

After you’ve set everything up, click “Apply” and close the window for now; you’ll probably want to set some breakpoints before starting the actual debugging. Do that, then simply launch the debug configuration you created, and off you go! If everything worked properly, you should soon get the standard debugger window, with all the nice things Java can offer you. Hope it helps some of us in our difficult business of writing distributed grid-enabled applications!
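
If Eclipse refuses to connect, it can help to rule out the IDE by attaching with the JDK’s command-line debugger instead. The following is a minimal sketch, assuming `jdb` is on your PATH and the JVM is waiting on port 8001 as above; `jdb_attach_command` is a hypothetical helper that only prints the command, so you can run it yourself in a second terminal.

```shell
# Sketch: print the jdb command that attaches to the JVM started by bin/hdebug.
# jdb ships with the JDK; jdb_attach_command is a hypothetical helper name.
jdb_attach_command() {
    host="${1:-localhost}"   # machine running the waiting JVM
    port="${2:-8001}"        # must match address= in the JDWP options
    printf 'jdb -attach %s:%s' "$host" "$port"
}

echo "Run this in a second terminal: $(jdb_attach_command localhost 8001)"
```

If jdb connects but Eclipse does not, the problem is most likely in the debug configuration rather than in the Hadoop start script.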

Hive remote debugging

It turned out that debugging the code is not very easy, so here is a useful script we used to enable remote debugging in Hive. We used Eclipse remote debugging with Hadoop 0.20.1 running in standalone mode with Hive 0.5.0.

Please remember to remove any line breaks that were added only to format the script. Also, a better job could be done by using something like a ‘for’ loop to collect all the lib jars from the Hadoop and Hive lib directories.
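
The ‘for’ loop idea could look roughly like the sketch below. `build_classpath` is a hypothetical helper, not part of Hadoop or Hive, and the default paths are simply the ones used in this post; adjust them to your installation. Note that it picks up every jar it finds, so it does not reproduce the hand-written list exactly, and it leaves out $JAVA_HOME/lib/tools.jar.

```shell
# Sketch: collect every .jar in the given directories into one colon-separated string.
# build_classpath is a hypothetical helper, not part of Hadoop or Hive.
build_classpath() {
    cp=""
    for dir in "$@"; do
        for jar in "$dir"/*.jar; do
            [ -e "$jar" ] || continue    # skip the unexpanded pattern when a directory has no jars
            cp="${cp:+$cp:}$jar"
        done
    done
    printf '%s' "$cp"
}

# Default locations taken from this post; override them through the environment.
HADOOP_HOME="${HADOOP_HOME:-/home/hadoop/hadoop-0.20.1}"
HIVE_HOME="${HIVE_HOME:-/home/hadoop/hive-0.5.0-bin}"

CLASSPATH="$HIVE_HOME/conf:$HADOOP_HOME/conf:$(build_classpath "$HADOOP_HOME" "$HADOOP_HOME/lib" "$HADOOP_HOME/lib/jsp-2.1" "$HIVE_HOME/lib")"
export CLASSPATH
```

The conf directories are listed first so that configuration files are found before anything bundled inside a jar.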

export HADOOP_HOME=/home/hadoop/hadoop-0.20.1

export HIVE_HOME=/home/hadoop/hive-0.5.0-bin

export JAVA_HOME=/usr/lib/jvm/java-6-sun

export HIVE_LIB=$HIVE_HOME/lib

export HIVE_CLASSPATH=$HIVE_HOME/conf:$HIVE_LIB/antlr-runtime-3.0.1.jar:$HIVE_LIB/asm-3.1.jar:\
$HIVE_LIB/commons-cli-2.0-SNAPSHOT.jar:$HIVE_LIB/commons-codec-1.3.jar:\
$HIVE_LIB/commons-collections-3.2.1.jar:$HIVE_LIB/commons-lang-2.4.jar:\
$HIVE_LIB/commons-logging-1.0.4.jar:$HIVE_LIB/commons-logging-api-1.0.4.jar:\
$HIVE_LIB/datanucleus-core-1.1.2.jar:$HIVE_LIB/datanucleus-enhancer-1.1.2.jar:\
$HIVE_LIB/datanucleus-rdbms-1.1.2.jar:$HIVE_LIB/derby.jar:$HIVE_LIB/hive-anttasks-0.5.0.jar:\
$HIVE_LIB/hive-cli-0.5.0.jar:$HIVE_LIB/hive-common-0.5.0.jar:$HIVE_LIB/hive_contrib.jar:\
$HIVE_LIB/hive-exec-0.5.0.jar:$HIVE_LIB/hive-hwi-0.5.0.jar:$HIVE_LIB/hive-jdbc-0.5.0.jar:\
$HIVE_LIB/hive-metastore-0.5.0.jar:$HIVE_LIB/hive-serde-0.5.0.jar:\
$HIVE_LIB/hive-service-0.5.0.jar:$HIVE_LIB/hive-shims-0.5.0.jar:\
$HIVE_LIB/jdo2-api-2.3-SNAPSHOT.jar:$HIVE_LIB/jline-0.9.94.jar:\
$HIVE_LIB/json.jar:$HIVE_LIB/junit-3.8.1.jar:$HIVE_LIB/libfb303.jar:\
$HIVE_LIB/libthrift.jar:$HIVE_LIB/log4j-1.2.15.jar:\
$HIVE_LIB/mysql-connector-java-5.0.0-bin.jar:$HIVE_LIB/stringtemplate-3.1b1.jar:\
$HIVE_LIB/velocity-1.5.jar:

export HADOOP_LIB=$HADOOP_HOME/bin/../lib

export HADOOP_CLASSPATH=$HADOOP_HOME/bin/../conf:$JAVA_HOME/lib/tools.jar:\
$HADOOP_HOME/bin/..:$HADOOP_HOME/bin/../hadoop-0.20.1-core.jar:\
$HADOOP_LIB/commons-cli-1.2.jar:$HADOOP_LIB/commons-codec-1.3.jar:$HADOOP_LIB/commons-el-1.0.jar:\
$HADOOP_LIB/commons-httpclient-3.0.1.jar:$HADOOP_LIB/commons-logging-1.0.4.jar:\
$HADOOP_LIB/commons-logging-api-1.0.4.jar:$HADOOP_LIB/commons-net-1.4.1.jar:$HADOOP_LIB/core-3.1.1.jar:\
$HADOOP_LIB/hsqldb-1.8.0.10.jar:$HADOOP_LIB/jasper-compiler-5.5.12.jar:\
$HADOOP_LIB/jasper-runtime-5.5.12.jar:$HADOOP_LIB/jets3t-0.6.1.jar:$HADOOP_LIB/jetty-6.1.14.jar:\
$HADOOP_LIB/jetty-util-6.1.14.jar:$HADOOP_LIB/junit-3.8.1.jar:$HADOOP_LIB/kfs-0.2.2.jar:\
$HADOOP_LIB/log4j-1.2.15.jar:$HADOOP_LIB/oro-2.0.8.jar:$HADOOP_LIB/servlet-api-2.5-6.1.14.jar:\
$HADOOP_LIB/slf4j-api-1.4.3.jar:$HADOOP_LIB/slf4j-log4j12-1.4.3.jar:$HADOOP_LIB/xmlenc-0.52.jar:\
$HADOOP_LIB/jsp-2.1/jsp-2.1.jar:$HADOOP_LIB/jsp-2.1/jsp-api-2.1.jar:

export CLASSPATH=$HADOOP_CLASSPATH:$HIVE_CLASSPATH:$CLASSPATH

export DEBUG_INFO="-Xmx1000m -Xdebug -Djava.compiler=NONE -Xrunjdwp:transport=dt_socket,address=8001,server=y,suspend=n"

$JAVA_HOME/bin/java $DEBUG_INFO -classpath $CLASSPATH -Dhadoop.log.dir=$HADOOP_HOME/bin/../logs \
-Dhadoop.log.file=hadoop.log -Dhadoop.home.dir=$HADOOP_HOME/bin/.. \
-Dhadoop.id.str= -Dhadoop.root.logger=INFO,console -Djava.library.path=$HADOOP_LIB/native/Linux-i386-32 \
-Dhadoop.policy.file=hadoop-policy.xml org.apache.hadoop.util.RunJar $HIVE_LIB/hive-service-0.5.0.jar \
org.apache.hadoop.hive.service.HiveServer

$JAVA_HOME/bin/java $DEBUG_INFO -classpath $CLASSPATH -Dhadoop.log.dir=$HADOOP_HOME/bin/../logs \
-Dhadoop.log.file=hadoop.log \
-Dhadoop.home.dir=$HADOOP_HOME/bin/.. -Dhadoop.id.str= -Dhadoop.root.logger=INFO,console \
-Djava.library.path=$HADOOP_LIB/native/Linux-i386-32 -Dhadoop.policy.file=hadoop-policy.xml \
org.apache.hadoop.util.RunJar $HIVE_LIB/hive-cli-0.5.0.jar org.apache.hadoop.hive.cli.CliDriver |

|